We rely on online portals more than ever before for banking, healthcare or government support. In-person support is becoming rarer, so navigating these digital spaces is growing harder to avoid and more important to master.

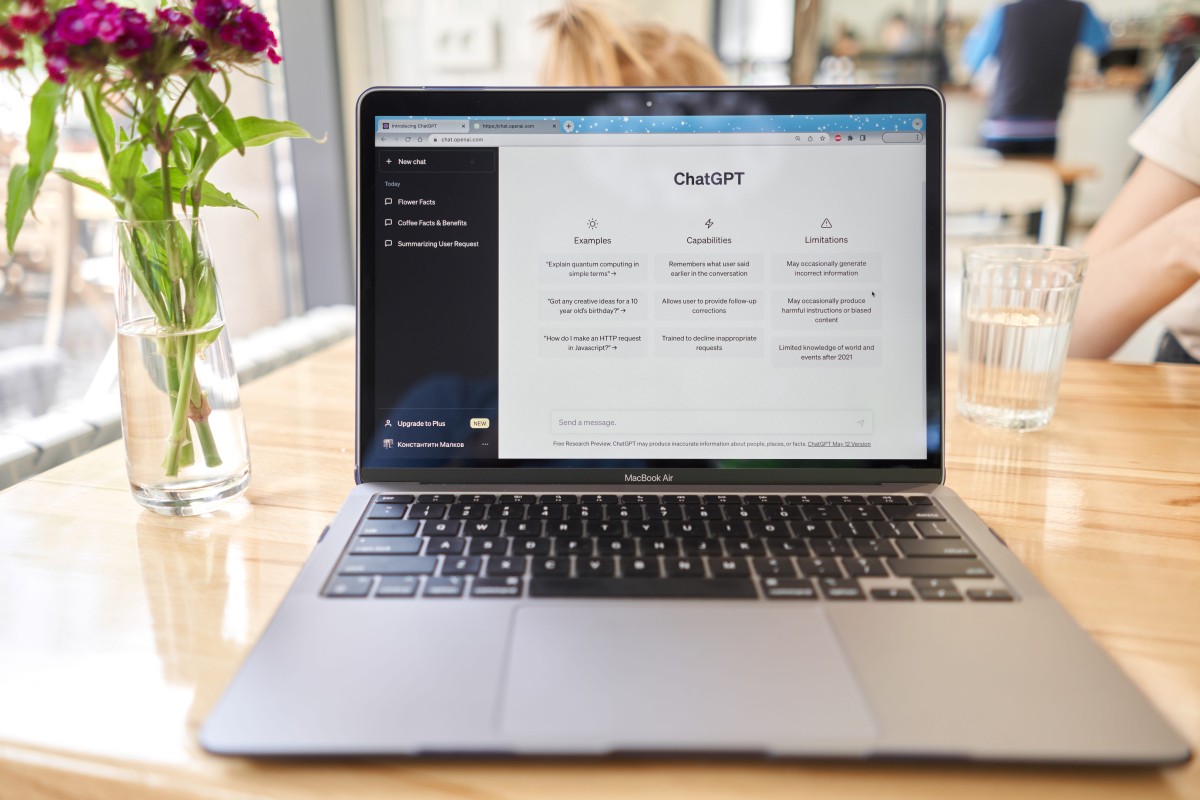

One research team has co-designed an AI-powered chatbot to guide older adults through digital portals and help them access essential services. The team says properly designed technology can help older people feel safe, confident and included online – not just able to use technology, but comfortable in doing so.

The study identified the limits of current ‘digitally inclusive’ design, and the researchers proposed a new concept: socially inclusive design. “Socially inclusive design asks, does this technology help people feel they belong, that they can act independently, and that any concerns about safety are taken seriously,” says Dr Jade Brooks, IT lecturer at the University of Auckland’s business school.

The study drew on interviews with seniors, volunteer caseworkers and staff of the partner organisation. “The chatbot is intended to complement and, in some cases, relieve caseworkers’ workload by guiding seniors step-by-step through online tasks, while also helping build skills and confidence over time.”

“We programmed supportive, reassuring, and adaptive settings that allow seniors to build confidence over time, enabling independent digital interactions,” says associate professor Dr Yenni Tim from the University of New South Wales.

“We also provided the system with positive feedback mechanisms and community-building features that encourage seniors to share experiences and develop a sense of belonging within its digital environments.”

Knowing how to engage with AI safely and confidently is just as important as learning to use the internet itself. AI can be helpful, but it requires a level of awareness and judgment that many users are still developing.

AI systems are designed to generate responses based on patterns in data, not lived experience or true understanding. This means they can be useful for guidance, explanations and support, but they are not always correct.

Treating AI as a helpful assistant rather than an authority figure is a good baseline. It is especially important to verify information that relates to finances, health or law.

Beyond knowing what AI is and isn’t, privacy is another key consideration. Many AI tools rely on user input to generate responses, which means the information shared can be stored or used to improve systems.

Avoid entering sensitive personal details such as bank information, passwords, addresses or medical records unless you are certain the platform is secure and designed for that purpose. When in doubt, keep interactions general and anonymous.

It is also worth paying attention to how AI communicates. Well-designed systems should be transparent about what they are, how they work, and where their limitations lie.

If a tool presents itself as human, avoids clarifying its capabilities, or pressures users into making quick decisions, that’s a red flag. Sensible navigation includes recognising when something doesn’t feel right and stepping back.

Another practical habit is to use AI as a starting point, not the final step. For example, an AI chatbot might help draft an email, explain a concept, or guide someone through an online form.

But reviewing, editing and cross-checking that output ensures it truly meets your needs. This not only reduces risk but also builds confidence and skill over time.

Confidence with AI grows through repeated use. Taking time to explore AI tools in low-pressure situations can help build comfort.

Support from family members, community groups or digital literacy programmes can also reinforce safe habits and provide reassurance.

Importantly, sensible AI use is not about avoiding the technology altogether. It is about engaging with it on your own terms. That includes setting boundaries, asking questions, and feeling empowered to stop using a tool if it doesn’t meet your expectations.

Keep your safety front of mind and remain independent. AI can be a valuable companion, but don’t think of it as infallible. Technology should adapt to people, not the other way around.